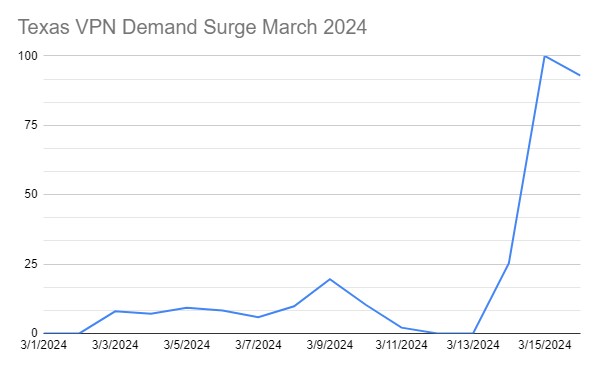

In this cross-sectional analysis of a nationally representative sample of 100 nonfederal acute care hospitals, 96.0% of hospital websites transmitted user information to third parties, whereas 71.0% of websites included a publicly accessible privacy policy. Of 71 privacy policies, 40 (56.3%) disclosed specific third-party companies receiving user information.

[…]

Of 100 hospital websites, 96 […] transferred user information to third parties. Privacy policies were found on 71 websites […] 70 […] addressed how collected information would be used, 66 […] addressed categories of third-party recipients of user information, and 40 […] named specific third-party companies or services receiving user information.

[…]

In this cross-sectional study of a nationally representative sample of 100 nonfederal acute care hospitals, we found that although 96.0% of hospital websites exposed users to third-party tracking, only 71.0% of websites had an available website privacy policy. Policies averaged more than 2500 words in length and were written at a college reading level. Given estimates that more than one-half of adults in the US lack literacy proficiency and that the average patient in the US reads at a grade 8 level, the length and complexity of privacy policies likely pose substantial barriers to users’ ability to read and understand them.27,32
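To make the gap between a college reading level and a grade 8 level concrete, the sketch below computes a grade-level score from simple text counts. This excerpt does not state which readability measure the study used; the Flesch-Kincaid grade level formula is assumed here only as one widely used option, and the sentence and syllable counts are hypothetical values chosen for illustration, not data from the study.

```python
def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Return the Flesch-Kincaid grade level for the given text counts.

    Assumption: this formula stands in for whatever readability measure
    the study actually applied, which the excerpt does not specify.
    """
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


# Hypothetical policy: 2500 words (echoing the reported average length),
# with made-up counts of 100 sentences and 4250 syllables.
grade = flesch_kincaid_grade(words=2500, sentences=100, syllables=4250)
target_grade = 8  # average US patient reading level cited in the text
grade_gap = grade - target_grade
```

With these illustrative counts the score is roughly 14.2, about six grade levels above the grade 8 level cited for the average patient.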

[…]

Only 56.3% of policies (and only 40 hospitals overall) identified specific third-party recipients. Named third parties tended to be companies familiar to users, such as Google. This lack of detail regarding third-party data recipients may lead users to assume that they are being tracked only by a small number of companies that they know well, when, in fact, hospital websites included in this study transferred user data to a median of 9 domains.
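The two figures in this paragraph use different denominators: 56.3% is a share of the 71 published policies, whereas 40 hospitals is a share of all 100 websites sampled. The short calculation below reproduces both from the counts reported in the text; the helper name `pct` is illustrative only.

```python
def pct(count: int, total: int) -> float:
    """Percentage of `count` out of `total`, rounded to one decimal place."""
    return round(100 * count / total, 1)


hospitals_sampled = 100   # hospital websites in the sample
policies_found = 71       # websites with an accessible privacy policy
named_recipients = 40     # policies naming specific third-party recipients

share_of_policies = pct(named_recipients, policies_found)      # 56.3
share_of_hospitals = pct(named_recipients, hospitals_sampled)  # 40.0
```

Read against the whole sample, 40.0% of hospital websites named any specific third-party recipient, even though 96 of the 100 transferred user data.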

[…]

In addition to presenting risks for users, inadequate privacy policies may pose risks for hospitals. Although hospitals are generally not required under federal law to have a website privacy policy that discloses their methods of collecting and transferring data from website visitors, hospitals that do publish website privacy policies may be subject to enforcement by regulatory authorities like the Federal Trade Commission (FTC).33 The FTC has taken the position that entities that publish privacy policies must ensure that these policies reflect their actual practices.34 For example, entities that promise they will delete personal information upon request but fail to do so in practice may be in violation of the FTC Act.34

[…]