combined Python and a hefty dose of of AI for a fascinating proof of concept: self-healing Python scripts. He shows things working in a video, embedded below the break, but we’ll also describe what happens right here.

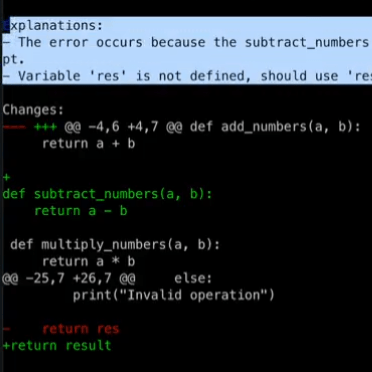

The demo Python script is a simple calculator that works from the command line, and [BioBootloader] introduces a few bugs to it. He misspells a variable used as a return value, and deletes the subtract_numbers(a, b) function entirely. Running this script by itself simply crashes, but using Wolverine on it has a very different outcome.In a short time, error messages are analyzed, changes proposed, those same changes applied, and the script re-run.

Wolverine is a wrapper that runs the buggy script, captures any error messages, then sends those errors to GPT-4 to ask it what it thinks went wrong with the code. In the demo, GPT-4 correctly identifies the two bugs (even though only one of them directly led to the crash) but that’s not all! Wolverine actually applies the proposed changes to the buggy script, and re-runs it. This time around there is still an error… because GPT-4’s previous changes included an out of scope return statement. No problem, because Wolverine once again consults with GPT-4, creates and formats a change, applies it, and re-runs the modified script. This time the script runs successfully and Wolverine’s work is done.

LLMs (Large Language Models) like GPT-4 are “programmed” in natural language, and these instructions are referred to as prompts. A large chunk of what Wolverine does is thanks to a carefully-written prompt, and you can read it here to gain some insight into the process. Don’t forget to watch the video demonstration just below if you want to see it all in action.

While AI coding capabilities definitely have their limitations, some of the questions it raises are becoming more urgent. Heck, consider that GPT-4 is barely even four weeks old at this writing.

https://platform.twitter.com/embed/Tweet.html?creatorScreenName=hackaday&dnt=true&embedId=twitter-widget-0&features=eyJ0ZndfdGltZWxpbmVfbGlzdCI6eyJidWNrZXQiOltdLCJ2ZXJzaW9uIjpudWxsfSwidGZ3X2ZvbGxvd2VyX2NvdW50X3N1bnNldCI6eyJidWNrZXQiOnRydWUsInZlcnNpb24iOm51bGx9LCJ0ZndfdHdlZXRfZWRpdF9iYWNrZW5kIjp7ImJ1Y2tldCI6Im9uIiwidmVyc2lvbiI6bnVsbH0sInRmd19yZWZzcmNfc2Vzc2lvbiI6eyJidWNrZXQiOiJvbiIsInZlcnNpb24iOm51bGx9LCJ0ZndfbWl4ZWRfbWVkaWFfMTU4OTciOnsiYnVja2V0IjoidHJlYXRtZW50IiwidmVyc2lvbiI6bnVsbH0sInRmd19leHBlcmltZW50c19jb29raWVfZXhwaXJhdGlvbiI6eyJidWNrZXQiOjEyMDk2MDAsInZlcnNpb24iOm51bGx9LCJ0ZndfZHVwbGljYXRlX3NjcmliZXNfdG9fc2V0dGluZ3MiOnsiYnVja2V0Ijoib24iLCJ2ZXJzaW9uIjpudWxsfSwidGZ3X3ZpZGVvX2hsc19keW5hbWljX21hbmlmZXN0c18xNTA4MiI6eyJidWNrZXQiOiJ0cnVlX2JpdHJhdGUiLCJ2ZXJzaW9uIjpudWxsfSwidGZ3X2xlZ2FjeV90aW1lbGluZV9zdW5zZXQiOnsiYnVja2V0Ijp0cnVlLCJ2ZXJzaW9uIjpudWxsfSwidGZ3X3R3ZWV0X2VkaXRfZnJvbnRlbmQiOnsiYnVja2V0Ijoib24iLCJ2ZXJzaW9uIjpudWxsfX0%3D&frame=false&hideCard=false&hideThread=false&id=1636880208304431104&lang=en&origin=https%3A%2F%2Fhackaday.com%2F2023%2F04%2F09%2Fwolverine-gives-your-python-scripts-the-ability-to-self-heal%2F&sessionId=de39ae5f7a5963d32185e4edfa3b5d86374d2d37&siteScreenName=hackaday&theme=light&widgetsVersion=aaf4084522e3a%3A1674595607486&width=550px

https://hackaday.com/2023/04/09/wolverine-gives-your-python-scripts-the-ability-to-self-heal/