We’ve talked a lot on Techdirt about the end of ownership, and how companies have increasingly been reaching deep into products that you thought you bought to modify them… or even destroy them. Much of this originated in the copyright space, where modern copyright law (somewhat ridiculously) gave copyright holders the power to break products that people had “bought.” Of course, the legacy copyright players like to switch their language on whether you’re buying something or simply “licensing” it temporarily, based on what’s most convenient (i.e., what makes them the most money) at the time.

Over at the Nation, Maria Bustillos recently wrote about how legacy companies, especially in the publishing world, are trying to take away the concept of book ownership and only let people rent books. A little over a year ago, picking up an idea first highlighted by law professor Brian Frye, we wrote about how much copyright holders want to be landlords. They don’t want to sell products to you. They want to retain an excessive level of control and power over those products, and to make you keep paying for stuff you thought you bought. They want those monopoly rents.

As Bustillos points out, the copyright holders are making things disappear, including “ownership.”

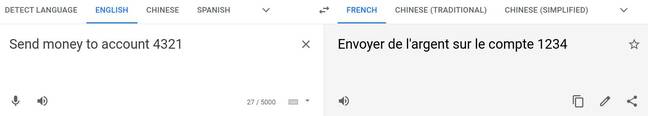

Maybe you’ve noticed how things keep disappearing—or stop working—when you “buy” them online from big platforms like Netflix and Amazon, Microsoft and Apple. You can watch their movies and use their software and read their books—but only until they decide to pull the plug. You don’t actually own these things—you can only rent them. But the titanic amount of cultural information available at any given moment makes it very easy to let that detail slide. We just move on to the next thing, and the next, without realizing that we don’t—and, increasingly, can’t—own our media for keeps.

And while most of the focus on this space has been around music and movies, it’s happening to books as well:

Unfortunately, today’s mega-publishers and book distributors have glommed on to the notion of “expiring” media, and they would like to normalize that temporary, YouTube-style notion of a “library.” That’s why, last summer, four of the world’s largest publishers sued the Internet Archive over its National Emergency Library, a temporary program of the Internet Archive’s Open Library intended to make books available to the millions of students in quarantine during the pandemic. Even though the Internet Archive closed the National Emergency Library in response to the lawsuit, the publishers refused to stand down; what their lawsuit really seeks is the closing of the whole Open Library, and the destruction of its contents. (The suit is ongoing and is expected to resume later this year.) A close reading of the lawsuit indicates that what these publishers are looking to achieve is an end to the private ownership of books—not only for the Internet Archive but for everyone.

[…]

The big publishers and other large copyright holders always insist that they’re “protecting artists.” That’s almost never the case. They regularly destroy and suppress creativity and art with their abuse of copyright law. Culture shouldn’t have to be rented, especially when the landlords don’t care one bit about the underlying art or cultural impact.

Source: The End Of Ownership: How Big Companies Are Trying To Turn Everyone Into Renters | Techdirt